Mon-Fri, 9:00-17:00 (Beijing Time, UTC+8)

Frontier Insights

We are dedicated to advancing the technology industry and sharing expertise in technical, business, and cultural domains.

BMS DXP: Driving 2026 SEO and GEO Convergent Growth

Publication date: January 21, 2026

I. Industry Trend Insight: The Inflection Point of SEO and GEO Convergence in 2026

Entering 2026, overseas growth pathways for Chinese B2B manufacturing enterprises are undergoing a silent yet profound paradigm shift. The era of relying solely on single search engine rankings to acquire traffic is coming to an end. A new, more challenging "dual-track" search era has arrived.

(1) Duality of Search Entry Points: From "Human Crawlers" to "AI Agents"

Traditional search engines such as Google and Bing, the core battlegrounds of SEO, remain important. However, new traffic channels have emerged with force. Today it is not only Google that needs to read your website; AI agents like ChatGPT, Claude, and Perplexity also need to understand your content efficiently so they can accurately reference your brand in AI-generated responses. A company's content visibility therefore no longer depends solely on traditional search engine rankings, but increasingly on whether it can be discovered, understood, and trusted by large language models (LLMs).

(2) Common Growth Challenges for Enterprises: Why You Remain "Invisible"

Many manufacturing companies investing in overseas marketing are not inactive—they are simply applying outdated methods to new environments, commonly falling into the following traps:

1. Content Systems Disconnected from AI: Website content remains outdated, fragmented, and unstructured, lacking clear professional knowledge, verifiable industry information, and continuously updated authoritative sources that AI can comprehend and cite. If AI cannot "trust" you, it naturally won’t recommend you.

2. Fragmented Content Assets: Product information, technical documents, images, and videos are scattered across different platforms, preventing the formation of a unified, coherent perception of expertise and authority (E-E-A-T) recognizable by both search engines and AI.

3. Visibility Black Hole: Companies are completely unable to monitor their presence in AI-powered searches (e.g., recommendations from ChatGPT or citations in Google AI Overviews), placing them at an informational blind spot compared to competitors and rendering optimization efforts directionless.

(3) SEO and GEO: From Separation to Convergence

This marks the historic inflection point where SEO (Search Engine Optimization) and GEO (Generative Engine Optimization) begin to converge. Their core distinctions and interconnections are becoming increasingly clear:

• SEO: Focuses on optimizing a website’s ranking within traditional search engines (e.g., Google, Bing).

• GEO: Focuses on optimizing how often content is recommended and cited by generative AI engines (e.g., ChatGPT, Perplexity).

However, these two are not mutually exclusive but rather form a "dual-engine" system for overseas customer acquisition. Based on practices in mature overseas markets, the traffic structure is stabilizing as follows:

• Traditional Search (Google SEO): 50–60%

• Generative AI Search (GEO): 20–35%

• Brand / Community / Content Diffusion: 10–20%

Over 70% of predictable growth traffic fundamentally relies on "content quality × content coverage". Whether aiming for rankings on Google or citations in ChatGPT responses, high-quality, structured, and continuously updated content assets are essential.

(4) Synchronized Evolution of Technical Infrastructure

This convergence is also reshaping the underlying technical conventions. Traditional robots.txt and sitemap.xml were designed for search engine crawlers. To serve AI agents such as OpenAI's GPTBot and Anthropic's ClaudeBot in the age of AI search, newer proposals like llms.txt have emerged. Sometimes described as the "robots.txt for AI bots", llms.txt is a proposed Markdown file placed at the site root that gives LLMs a curated overview of a website's structure and key content. Unlike robots.txt, it does not control crawler access, and it remains a proposal rather than a ratified standard.
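As a minimal sketch of how the existing robots.txt layer already applies to AI crawlers, the following uses Python's standard `urllib.robotparser` to check which user agents may fetch a URL. The robots.txt rules, the `example.com` URLs, and the helper name `allowed_agents` are illustrative assumptions, not taken from BMS DXP.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; the rules are illustrative only.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /internal/

User-agent: ClaudeBot
Disallow: /internal/

User-agent: *
Disallow: /admin/
"""


def allowed_agents(robots_txt: str, url: str, agents: list[str]) -> dict[str, bool]:
    """Return, for each user agent, whether robots.txt permits fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


AGENTS = ["GPTBot", "ClaudeBot", "Googlebot"]
public_page = allowed_agents(ROBOTS_TXT, "https://example.com/products/compressor", AGENTS)
internal_page = allowed_agents(ROBOTS_TXT, "https://example.com/internal/specs", AGENTS)
```

A check like this only answers whether a crawler is allowed to read a page; what the crawler should read first is the job of sitemap.xml and, for LLMs, of the proposed llms.txt.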

(5) Key Characteristics of the 2026 Convergence Inflection Point

In summary, the 2026 SEO-GEO convergence inflection point exhibits the following distinct characteristics:

1. Diversified Entry Points: Traffic sources expand from a single search engine model to a dual-track model of "traditional search + AI search".

2. Unified Objectives: Optimization goals evolve from pursuing "rankings" to simultaneously achieving "rankings" and "AI citation".

3. Technological Synergy: Technical infrastructure must support both traditional XML Sitemaps and emerging AI-friendly protocols (e.g., llms.txt).

4. Systematic Strategy: Content strategy shifts from ad hoc, one-off publishing to building sustainable, machine-understandable industry knowledge systems.

In this context, isolated optimization tools have become ineffective. Enterprises now require platform-level solutions that integrate content management, digital asset management, and conversion capabilities to support both SEO and GEO strategies. The BMS Digital Experience Platform (BMS DXP) is the next-generation growth infrastructure built to address this convergence inflection point.

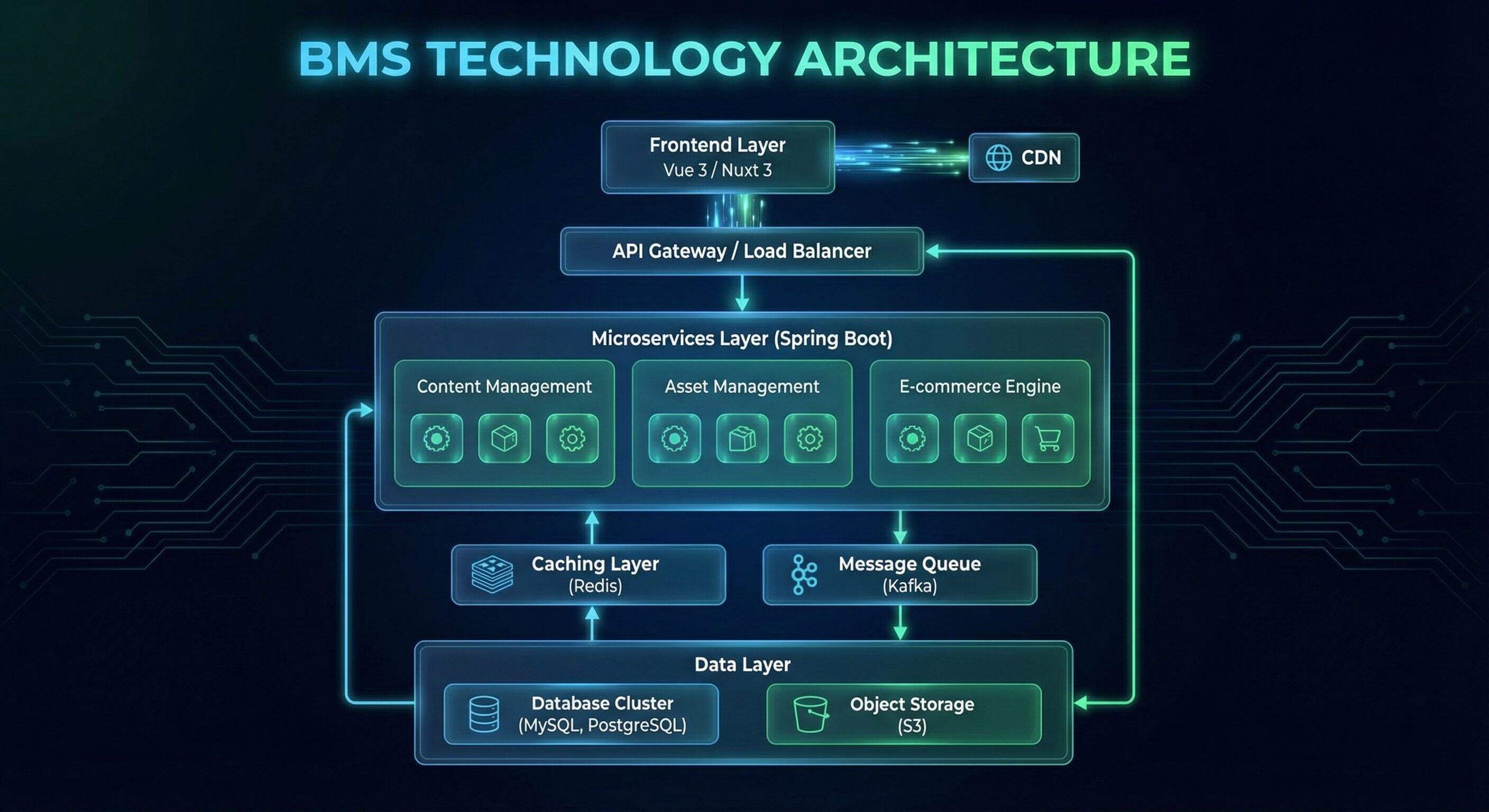

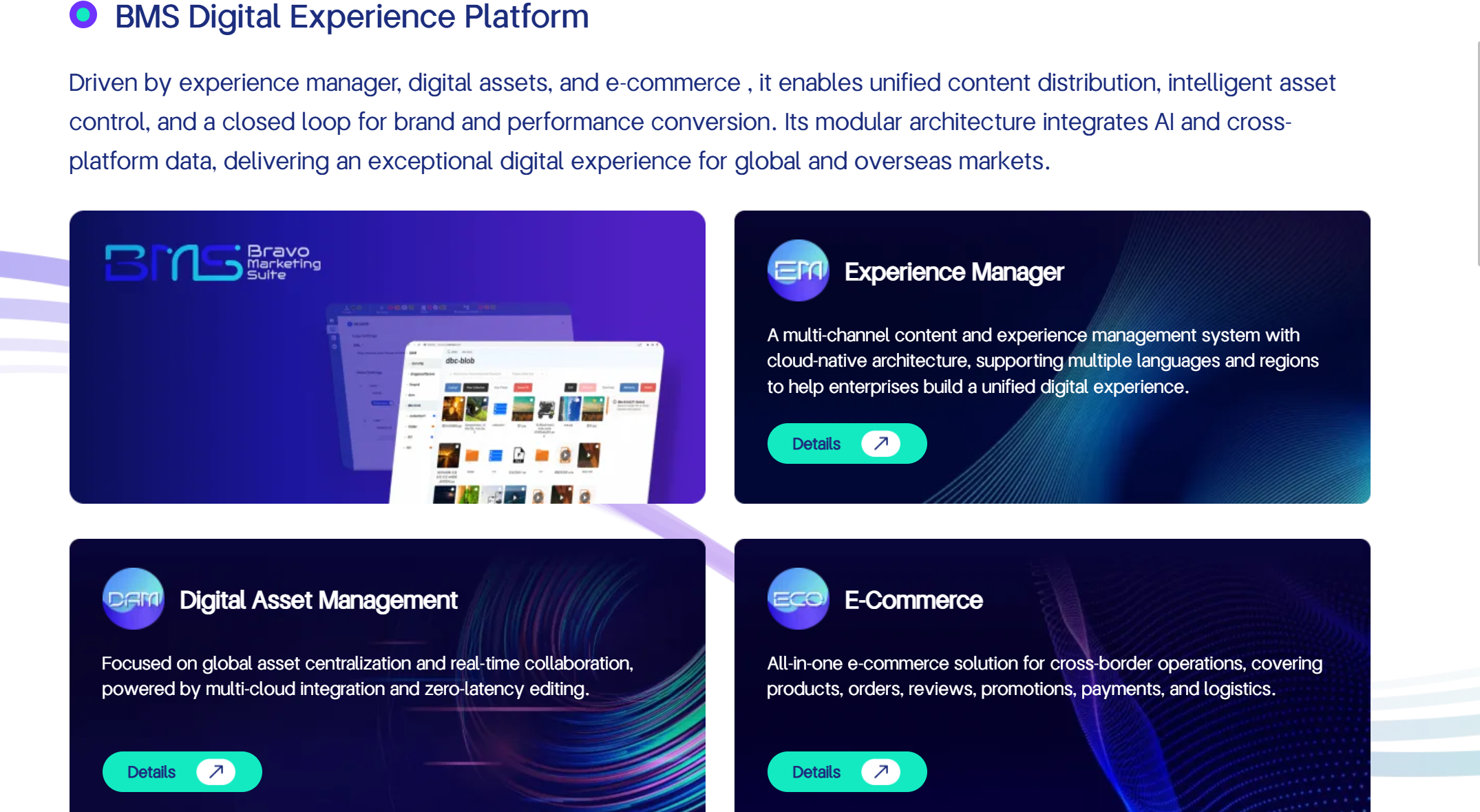

II. Technical Architecture of the BMS Digital Experience Platform

To meet the growth demands created by the 2026 SEO-GEO convergence, enterprises must move from isolated tools to platform-level solutions. BMS Digital Experience Platform (BMS DXP) is the "next-generation growth infrastructure" engineered for this shift. Its technical architecture rests on three core engines and integrates infrastructure optimized for AI search, with the aim of systematically turning content into digital assets that are recognized, trusted, and cited by both search engines and AI engines.

(1) Triple-Core Drive: Building an Integrated Growth Engine

BMS DXP is not merely a simple publishing tool. At its heart lies a systematic integration of content management, digital asset management, and e-commerce conversion—an engineering framework designed to "turn content into growth assets".

1. Experience Manager: Building a Knowledge Base for SEO+GEO

• Structured Content Production: Supports the creation of comprehensive industry knowledge systems covering complete user decision scenarios such as "selection, comparison, principles, applications, procurement". This structured content naturally aligns with the citation and Q&A logic of AI search (e.g., ChatGPT, Google AI Overview), laying a solid foundation for GEO optimization.

• Topic Cluster Strategy: The platform enables the creation of interconnected content networks (Topic Clusters). Instead of chasing individual keyword rankings in isolation, businesses build authoritative content clusters around core topics, systematically enhancing overall site expertise and trustworthiness (E-E-A-T)—a critical strategy beneficial for both traditional SEO and GEO.
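The Topic Cluster idea can be made concrete as a link-graph check: a core ("pillar") page and its supporting pages should link to each other in both directions. The sketch below is an assumption about how such a check might look; the function name, the URL paths, and the hub-and-spoke rule are hypothetical and not a description of the platform's internals.

```python
def missing_cluster_links(
    pillar: str,
    cluster_pages: list[str],
    links: dict[str, set[str]],
) -> list[tuple[str, str]]:
    """Return (source, target) link pairs that a hub-and-spoke cluster is missing.

    `links` maps each page to the set of pages it links to.
    """
    missing = []
    for page in cluster_pages:
        # The pillar page should link out to every supporting page...
        if page not in links.get(pillar, set()):
            missing.append((pillar, page))
        # ...and every supporting page should link back to the pillar.
        if pillar not in links.get(page, set()):
            missing.append((page, pillar))
    return missing


# Hypothetical site structure for a compressor topic cluster.
site_links = {
    "/compressors": {"/compressors/selection", "/compressors/comparison"},
    "/compressors/selection": {"/compressors"},
    "/compressors/comparison": set(),
}
gaps = missing_cluster_links(
    "/compressors",
    ["/compressors/selection", "/compressors/comparison"],
    site_links,
)
```

Here the comparison page never links back to the pillar, so it is reported as a gap in the cluster.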

2. Digital Asset Management: Strengthening Content Verifiability

• Unified Multi-Cloud Asset Management: Enables centralized management of technical documents, product images, application videos, inspection reports, and other digital assets stored across different cloud providers such as Alibaba Cloud, AWS, and Azure.

• Cross-Content Reusability: Digital assets seamlessly integrate with the content management core, ensuring the same technical white paper or product video can be reused across blogs, product pages, case studies, and more. This not only improves content production efficiency but also strengthens the site’s overall authority through consistent, verifiable materials.

3. E-Commerce: Closing the Loop from Content to Opportunity

This engine deeply integrates professional content, product data, and inquiry conversion pathways. When a technical blog post is recommended by an AI engine or gains traffic via SEO, visitors are smoothly guided to relevant product pages or inquiry forms, forming a complete conversion loop of "seen by AI/search → educated by content → generated inquiry", ultimately reducing long-term dependence on paid advertising.

(2) Automated Technical SEO: Zero-Latency Crawling and Indexing

To address tedious yet critical foundational work in technical SEO, BMS DXP renders these processes "invisible" through automation, ensuring search engine crawlers discover and understand website content with maximum efficiency.

1. Automatic Hierarchical Sitemap Generation and Indexing

• Problem Solved: For large websites with massive numbers of pages (e.g., over 50,000 URLs), manually creating and managing compliant Sitemap Index files and sub-files is extremely difficult.

• Platform Solution: BMS DXP intelligently detects site scale. When content volume exceeds thresholds, the system automatically generates a parent sitemap_index.xml file and intelligently splits URLs into multiple child files (e.g., sitemap-products-01.xml) based on content type or publication date—requiring no manual scripting or intervention.
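The splitting step can be sketched with the standard library alone. This is an illustrative sketch, not BMS DXP's implementation: the function names, the `sitemap-products` prefix, and the `example.com` base URL are assumptions, while the 50,000-URL per-file limit comes from the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_FILE = 50_000  # per-file limit in the sitemaps.org protocol


def build_urlset(urls: list[str]) -> str:
    """Serialize one child sitemap containing the given URLs."""
    root = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        ET.SubElement(ET.SubElement(root, "url"), "loc").text = url
    return ET.tostring(root, encoding="unicode")


def build_sitemaps(
    base_url: str,
    urls: list[str],
    prefix: str = "sitemap-products",
    max_urls: int = MAX_URLS_PER_FILE,
) -> dict[str, str]:
    """Split `urls` into child sitemaps and return {filename: xml}, index included."""
    files: dict[str, str] = {}
    index = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for start in range(0, len(urls), max_urls):
        number = start // max_urls + 1
        name = f"{prefix}-{number:02d}.xml"
        files[name] = build_urlset(urls[start:start + max_urls])
        # Each child file is referenced from the parent index by absolute URL.
        ET.SubElement(ET.SubElement(index, "sitemap"), "loc").text = f"{base_url}/{name}"
    files["sitemap_index.xml"] = ET.tostring(index, encoding="unicode")
    return files


# Small demonstration with a lowered limit so the split is visible.
demo_urls = [f"https://example.com/p/{i}" for i in range(5)]
demo_files = build_sitemaps("https://example.com", demo_urls, max_urls=2)
```

With five URLs and a limit of two per file, the result is three child files plus the index; in production the default limit would apply.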

2. Event-Driven Real-Time Sitemap Updates

• Problem Solved: In traditional CMS platforms, there is often a time lag between content publishing and Sitemap updates, causing delays in indexing new content.

• Platform Solution: BMS DXP uses an event-driven mechanism. When marketers publish or modify content, the system immediately injects the new URL into the appropriate Sitemap and updates the <lastmod> timestamp. When content is taken down, dead links are automatically removed from the Sitemap. Crawlers therefore see the change on their next fetch of the Sitemap, which makes better use of the crawl budget.
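A minimal sketch of the event-driven update, assuming an in-memory store that stands in for one sitemap file. The class name `SitemapStore` and its handler methods are hypothetical; only the `<lastmod>` element and the namespace come from the sitemap protocol.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from xml.sax.saxutils import escape


@dataclass
class SitemapStore:
    """In-memory stand-in for one sitemap file: URL -> lastmod string."""

    entries: dict[str, str] = field(default_factory=dict)

    def on_published(self, url: str, when: datetime) -> None:
        # Publish and modify events both upsert the URL with a fresh lastmod.
        # `when` is assumed to be timezone-aware.
        stamp = when.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")
        self.entries[url] = stamp

    def on_unpublished(self, url: str) -> None:
        # Takedown events drop the URL so crawlers are not sent to a 404.
        self.entries.pop(url, None)

    def render(self) -> str:
        rows = "".join(
            f"<url><loc>{escape(url)}</loc><lastmod>{stamp}</lastmod></url>"
            for url, stamp in sorted(self.entries.items())
        )
        return f'<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">{rows}</urlset>'


store = SitemapStore()
store.on_published(
    "https://example.com/blog/screw-vs-scroll",
    datetime(2026, 1, 21, 9, 0, tzinfo=timezone.utc),
)
```

The point of the design is that the sitemap is updated by the same event that changes the content, rather than by a scheduled rebuild.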

(3) AI-Ready Infrastructure: Seizing the Generative Search Gateway

To actively adapt to the AI search era, BMS DXP integrates LLM-focused optimization capabilities at the architectural level.

• Automatic Generation of llms.txt: Beyond traditional robots.txt and sitemap.xml, the platform pioneers automatic generation of llms.txt files tailored for AI agents (e.g., GPTBot, ClaudeBot). Sometimes described as the "robots.txt for AI bots", this file helps LLMs find and understand a website's core content more efficiently and accurately.

• Content Curation and Prioritization: The system can automatically extract Markdown summaries or plain text versions of high-value content (e.g., core product descriptions, white papers) and incorporate them into llms.txt. Administrators can define which content should be prioritized for AI recommendation, ensuring large models learn the most accurate and high-quality information from the enterprise, thereby increasing citation rates in AI-generated answers.
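The curation step might look like the sketch below, which renders a small llms.txt following the layout of the public llms.txt proposal (an H1 title, a blockquote summary, then H2 sections of Markdown links). The `Page` fields, the `priority` attribute, and the sample URLs are assumptions made for illustration; the source does not specify how BMS DXP stores these settings.

```python
from dataclasses import dataclass


@dataclass
class Page:
    title: str
    url: str
    summary: str
    section: str
    priority: int = 0  # hypothetical administrator setting; higher is listed first


def render_llms_txt(site_name: str, site_summary: str, pages: list[Page]) -> str:
    """Render an llms.txt document with sections ordered by their top-priority page."""
    lines = [f"# {site_name}", "", f"> {site_summary}", ""]
    sections: dict[str, list[Page]] = {}
    # sorted() is stable, so equal-priority pages keep their original order.
    for page in sorted(pages, key=lambda p: -p.priority):
        sections.setdefault(page.section, []).append(page)
    for section, items in sections.items():
        lines.append(f"## {section}")
        lines.append("")
        lines.extend(f"- [{p.title}]({p.url}): {p.summary}" for p in items)
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


sample_pages = [
    Page("Selection guide", "https://example.com/guide.md", "How to choose.", "Docs", 1),
    Page("Screw compressor", "https://example.com/screw.md", "Core product.", "Products", 5),
]
llms_txt = render_llms_txt("Example Co", "Industrial compressors.", sample_pages)
```

Because the product page carries the higher priority, its section appears first in the generated file.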

Through this technical architecture, BMS DXP provides enterprises with an integrated platform capable of concurrently maintaining traditional SEO (via automated Sitemap management) and emerging GEO (via AI-ready infrastructure). It ensures that regardless of how search technologies evolve, the enterprise’s content infrastructure remains optimally positioned, delivering foundational momentum for sustainable overseas growth.

III. SEO Optimization in Practice: From Sitemap to Full-Link Intelligent Optimization

If the BMS DXP platform described in the previous chapter is the "chassis" for future digital experiences, how are its capabilities translated into quantifiable, sustainable growth tactics on the actual SEO battlefield? That is the focus of this practical section: it starts with basic sitemap automation and ends with a full-link intelligent optimization closed loop that integrates content, technology, and conversion.

(I) Basic Technical SEO Automation: The Premise of Dual-Track Coverage

Basic technical SEO automation is the prerequisite for ensuring content visibility. In the technological environment of 2026, this is particularly reflected in the dual-track coverage of traditional search engines and AI search. Facing the challenges of content synchronization delays and indexing difficulties for large sites under traditional maintenance methods, as well as the risk of "falling behind" in the AI era, the BMS DXP content management module provides an intelligent solution. The platform achieves three key automations:

1. Breaking the Limit: Automatic Sitemap Hierarchical Structuring and Indexing – Search engines such as Google limit a single XML Sitemap file to 50,000 URLs or 50 MB uncompressed. BMS DXP intelligently identifies the site size: when the content volume exceeds the limit, the system automatically generates a parent sitemap_index.xml file and splits the URLs into multiple sub-files based on content type or publication time, without manual intervention. Even if the website grows to millions of pages, the files submitted to search engines always comply with the specification.

2. Defining "Real-Time": Sitemap Event-Driven Updates – Unlike traditional CMS systems that rely on scheduled tasks for rebuilding, BMS DXP establishes an event-driven update mechanism. When marketing personnel publish or modify content in the backend, the corresponding URL is injected into the Sitemap in real-time and the <lastmod> timestamp is updated. When a product is discontinued, the system can also automatically remove it from the Sitemap, preventing search engines from crawling 404 pages. This maximizes the search engine's crawling budget and significantly accelerates the indexing speed of new pages.

3. Laying the Foundation for the Future: Automatic llms.txt Generation – This is a core measure for the AI search era. In addition to the robots.txt and sitemap.xml files that serve Googlebot, BMS DXP can automatically generate and maintain an llms.txt file, the AI-agent counterpart of those crawling guidelines. The system can automatically extract Markdown or plain-text summaries of core website content and lets administrators select high-value content (such as core product descriptions and white papers) to include. This content is then actively "fed" to AI agents such as OpenAI's GPTBot and Anthropic's ClaudeBot, securing an entry point into AI-generated answers.

(II) Full-Link Intelligence: A Closed Loop from Crawling to Conversion

However, having the right "map" is only the first step; what matters more is the depth and conversion power of the content once it has been "seen." Technical SEO automation ensures that crawlers and AI can efficiently crawl the content, while full-link intelligence determines what they crawl and what users ultimately accomplish. This relies on the collaboration of three major engines within BMS DXP:

1. Content Engine: Building an AI-friendly knowledge system. The platform supports building a structured knowledge base covering the full "selection, comparison, principles, applications, purchasing" journey, which naturally fits the question-and-answer logic of AI tools such as ChatGPT. By implementing a Topic Cluster strategy, the system systematically improves the professionalism and trustworthiness of the entire website.

2. Digital Asset Management (DAM): Ensuring the authority and consistency of content. Multi-cloud unified storage and cross-content reuse ensure that, whichever AI platform references the content, the underlying product images, technical parameters, certification documents, and other materials are consistent and verifiable, strengthening the "Experience" and "Trust" aspects of E-E-A-T at the source.

3. E-commerce: Closing the loop from content to business opportunities. This engine quickly guides user interest from AI or search results toward inquiries or purchases. For example, following benchmark practice, when a user browses similar product pages multiple times, intelligent pop-ups (such as online technical consultation or one-click price comparison tools) can intervene at the right moment and guide the visitor toward an inquiry.
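The pop-up example in the E-commerce item can be sketched as a simple threshold rule over a visitor's session. The function name, the threshold of three views, and the category labels are assumptions chosen for illustration; the source does not state the actual trigger logic.

```python
from collections import Counter
from typing import Optional


def category_to_prompt(viewed_categories: list[str], threshold: int = 3) -> Optional[str]:
    """Return the product category to offer a consultation for, or None.

    `viewed_categories` lists the category of each product page viewed in the session.
    """
    if not viewed_categories:
        return None
    category, views = Counter(viewed_categories).most_common(1)[0]
    # Intervene only once the visitor has shown repeated interest in one category.
    return category if views >= threshold else None


session = ["screw", "scroll", "screw", "screw"]
prompt_for = category_to_prompt(session)
```

In this session the visitor has viewed screw compressor pages three times, so that category qualifies for a consultation prompt.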

Ultimately, this full-link closed loop manifests as a dynamically optimized process: high-quality, structured content produced by the content engine is efficiently pushed to search engines and AI through technical SEO automation, attracting targeted traffic; the traffic is enhanced by authoritative materials guaranteed by DAM on the website, deepening trust; and finally, the conversion engine captures sales leads, and customer behavior data is fed back into the content strategy, guiding the next round of content optimization. BMS DXP, as an integrated platform, automates all these processes to run in parallel in the background, reducing cumbersome technical costs to zero. This allows marketing teams to focus on creating content, while the system takes care of ensuring that the world (both humans and AI) sees and engages with that content.

IV. GEO Upgrade: Generative Engine Optimization for AI-Driven Visibility

After completing the intelligent transformation of the entire traditional search engine optimization (SEO) process, companies need to broaden their digital marketing horizons further. If the core of SEO is optimizing "ranking," then now that generative AI (such as ChatGPT, Claude, and Perplexity) is reshaping user search habits, the key to marketing success has quietly shifted to optimizing "whether it is recommended and cited by AI." This shift is closely tied to the evolution of AI search infrastructure, which underpins how generative AI retrieves, processes, and prioritizes content. This is the battlefield of Generative Engine Optimization (GEO), where understanding that infrastructure becomes a decisive competitive edge.

(I) From "Being Searched" to "Being Cited": GEO Redefines Visibility with AI Search Infrastructure

After 2025, the purchasing research behavior of overseas B2B buyers has changed profoundly. While traditional Google search still accounts for 50-60% of baseline traffic, generative AI search tools have rapidly penetrated the market and now account for 20-35% of traffic. Potential customers no longer simply enter keywords to obtain a list of links; they ask AI directly: "Recommend three reliable industrial air compressor suppliers for my North American automotive factory" or "Compare the energy consumption differences between screw and scroll compressors."

At this point, whether a company's content can be "seen," understood, and ultimately recommended and cited in AI-generated answers directly determines whether the brand gains an advantage in the new round of traffic distribution. The outcome depends heavily on how AI search infrastructure evaluates content credibility and relevance. The reality is harsh: most Chinese manufacturing companies are almost completely "invisible" in this area, largely because their content is not aligned with those requirements. The core pain points are:

- Lack of a content system compatible with AI search infrastructure: AI search prioritizes clearly structured professional content, verifiable industry knowledge, and continuously updated authoritative sources. Many company websites have scattered content, are not updated regularly, and lack systematic accumulation, making it difficult for AI to "trust" and cite them.

- Fragmented digital assets: Product data, technical documents, application cases, images, and videos are scattered across different systems and cannot be coordinated, making it difficult for GEO efforts to achieve visibility at scale.

- AI mention rate is invisible and uncontrollable: Companies generally cannot monitor how often, in what context, and relative to which competitors their content is cited in AI-generated answers, making targeted optimization impossible.
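To make "AI mention rate" concrete, one simple definition is the share of sampled AI answers that name the brand. The sketch below assumes the answers have already been collected by some means the source does not describe; the function name, the sample answers, and the competitor name "VendorX" are hypothetical.

```python
import re


def mention_rate(answers: list[str], brand: str) -> float:
    """Return the fraction of answers that mention `brand` as a whole word."""
    if not answers:
        return 0.0
    pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
    hits = sum(1 for answer in answers if pattern.search(answer))
    return hits / len(answers)


# Hypothetical sample of AI-generated answers to buyer questions.
sampled_answers = [
    "We recommend HPDMC and two other suppliers.",
    "Consider VendorX for small workshops.",
    "hpdmc offers screw compressors for automotive plants.",
    "No specific supplier can be recommended.",
]
own_rate = mention_rate(sampled_answers, "HPDMC")
competitor_rate = mention_rate(sampled_answers, "VendorX")
```

Tracking the same metric for competitors over the same sample gives the comparison that the last pain point says is missing.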

(II) BMS DXP: Building a GEO-Friendly "Content Hub" for AI Search Infrastructure

To address GEO challenges, single-point content creation tools are no longer effective; what is needed is a platform-level capability that unifies content, assets, and conversion data management. The three core engines of BMS DXP are designed for precisely this purpose and provide the core support for GEO:

- Content Engine: Builds a structured industry knowledge base oriented toward AI question-answering logic, matching how AI search infrastructure parses and retrieves information. It supports modular content such as "selection guides," "product comparisons," "technical principles," and "application scenarios," which naturally fits AI citation needs.

- Digital Asset Management (DAM): Provides unified management and cross-content reuse of multi-cloud assets such as technical white papers, test reports, product videos, and application images. This ensures that any data cited by AI is traceable to its source, greatly strengthening content verifiability (a core element of the E-E-A-T standard).

- E-commerce and Conversion Engine: Guides the targeted traffic brought by AI recommendations to the conversion stage through embedded product parameters, inquiry forms, or configurators. This forms a short closed loop of "cited by AI → deeply educated → generating inquiries."

The essence of BMS DXP is to upgrade discrete content into systematic growth assets, allowing enterprises not only to produce content for Google but also to "feed" high-quality, highly credible information to AI search infrastructure, thereby enhancing their standing in AI-driven search results.

(III) HPDMC Practice: When Professional Content Becomes AI's "Default Source of Truth" via AI Search Infrastructure

Take the overseas expansion of industrial equipment manufacturer HPDMC as an example. Its challenges are highly representative: a highly specialized industry, extremely fragmented search keywords, and no presence in AI search results. With the help of BMS DXP, HPDMC implemented a systematic GEO upgrade:

- Building a GEO-oriented content system: Focusing on its core products (such as compressors), HPDMC went beyond listing parameters and built a deep content cluster covering "material process principles," "selection guides for different application scenarios (such as automotive manufacturing, food and pharmaceuticals)," and "energy consumption and cost comparison analysis," all structured so that AI search infrastructure can parse them.

- Unifying and activating digital assets: The previously scattered technical white papers, third-party test reports, and videos of products operating under real working conditions were brought under unified management in the DAM center. These assets were then embedded into relevant articles as authoritative evidence, making the articles easier for AI to recognize and cite.

- Achieving integrated content distribution and conversion: This high-quality content is optimized and pushed simultaneously to the main website and multilingual sites through the platform, ensuring consistency across every touchpoint that AI may access. When overseas engineers consult AI tools on professional issues, HPDMC's structured answers become a highly probable source of reference.

The GEO effect is direct: multiple core technical content pieces are cited organically by AI tools, leading to a significant increase in overseas inquiries from AI channels and to higher customer quality, because customers have already received initial education through AI-generated answers before contacting sales. HPDMC's official website content has become an authoritative source of information cited by both industry partners and AI search tools.

(IV) Implementation Path for GEO Upgrade: Aligning with AI Search Infrastructure for Growth

For B2B companies going global, embracing GEO is not about overturning the past; it is a strategic upgrade built on SEO. An effective implementation path should include:

- Mindset Shift: Think about GEO first, then implement SEO. When planning content, the primary consideration should be "What questions will my target customers ask ChatGPT or similar AI tools?" Build answer-based content around five major scenarios ("Selection, Comparison, Principles, Applications, and Procurement") to match how AI search processes queries.

- Platform Construction: Use BMS DXP to build Topic Clusters. Abandon the isolated pursuit of single-keyword rankings and instead pursue overall website professionalism. Through the platform, interlink core products, solutions, technical documents, and success cases to form a powerful semantic network. This is the foundation of SEO and, in the GEO era, the key to AI recognizing your brand as an "industry expert."

- Unification and Collaboration: Integrate content, assets, and data. The barriers between CMS, DAM, and CRM/e-commerce systems must be broken down. Only a unified content and data hub can ensure that the information AI retrieves is current, consistent, and verifiable, which is the underlying capability needed to win AI's trust.

Marketing in the GEO era is a competition over "content authority" and "information verifiability." It requires a company's official website to evolve from a passive information display window into an active, highly trusted industry knowledge base and business opportunity conversion engine. With the systematic support of BMS DXP, companies can complete this upgrade, ensuring that in the traffic landscape reshaped by generative AI they are not only seen, but also trusted and chosen.

V. AI Automation: Exponential Improvement in Marketing Efficiency

When the technical infrastructure (Sitemap, llms.txt) and content production processes (Topic Cluster, DAM) are in place, the "last mile" of efficiency depends entirely on "automation." The core concept of the BMS Digital Experience Platform (BMS DXP) is to free people from complex, time-consuming, and error-prone manual operations, building marketing growth into a self-driving, self-optimizing automated workflow, ultimately achieving an exponential increase in marketing efficiency.

This is not a vision of the future, but rather event-based, real-time automation that BMS DXP has already implemented in two key areas: technical SEO and the entire marketing process.

(I) Technical SEO Automation: A Qualitative Leap from "Daily Updates" to "Zero Time Lag"

The biggest bottleneck of traditional technology stacks is the uncontrollable time lag between "content publishing" and "being discovered by search engines/AI." This usually relies on manual operations or daily scheduled tasks, leading to wasted valuable marketing windows, delayed indexing of new information, and broken links continuously damaging website authority.

BMS DXP completely reconstructs this process through an event-driven mechanism, achieving three core automations:

1. Real-time automatic Sitemap updates: When marketing personnel click the "Publish" or "Modify" button in the backend, the system immediately injects the newly generated URL into the corresponding Sitemap file and updates the <lastmod> timestamp. This means that "content publishing" and "notifying search engines of the URL" are synchronized in seconds.

2. Automatic broken link cleanup: When a page is taken down or deleted, BMS DXP automatically removes it from the Sitemap, preventing search engine crawlers from crawling 404 pages, effectively protecting the overall website authority and maximizing the limited crawling budget.

3. Synchronized maintenance of llms.txt: Also based on the event-driven mechanism, when core content is updated, the system automatically extracts and updates the content summary, ensuring that the information fed to AI agents (such as GPTBot and ClaudeBot) is always the latest and most accurate.
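The three automations share one pattern: a single content event fans out to several maintenance tasks. The sketch below shows that fan-out with a tiny publish/subscribe bus. The `EventBus` class, the event names, and the log messages are hypothetical stand-ins; real handlers would rewrite the sitemap and llms.txt files.

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[dict], None]


class EventBus:
    """Minimal in-process publish/subscribe dispatcher."""

    def __init__(self) -> None:
        self._handlers: dict[str, list[Handler]] = defaultdict(list)

    def subscribe(self, event: str, handler: Handler) -> None:
        self._handlers[event].append(handler)

    def publish(self, event: str, payload: dict) -> None:
        # Handlers run in subscription order; unknown events are ignored.
        for handler in self._handlers.get(event, []):
            handler(payload)


def wire_up(bus: EventBus, log: list[str]) -> None:
    """Register placeholder handlers that only record what they would do."""
    bus.subscribe("content.published", lambda p: log.append(f"sitemap: upsert {p['url']}"))
    bus.subscribe("content.published", lambda p: log.append(f"llms.txt: refresh summary for {p['url']}"))
    bus.subscribe("content.removed", lambda p: log.append(f"sitemap: drop {p['url']}"))


bus = EventBus()
actions: list[str] = []
wire_up(bus, actions)
bus.publish("content.published", {"url": "/blog/new-post"})
bus.publish("content.removed", {"url": "/products/old-model"})
```

Adding a fourth automation then means registering one more handler, without touching the publishing workflow itself.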

This combination of automated actions ultimately reduces the time cost caused by technical complexities to zero. Marketing teams no longer need to worry about XML syntax, file splitting, or AI crawling protocols, and can focus on creating high-quality content, while BMS DXP ensures that the world (whether people using search engines or AI agents) sees this content immediately.

(II) Marketing Process Automation: Building a Growth Flywheel of "Content → Assets → Conversion"

Higher efficiency doesn't just mean "faster," but also transforming content production into reusable, scalable, and directly growth-driving business assets. This is precisely the benefit brought by the synergistic automation of the BMS DXP's three core engines.

As emphasized earlier, over 70% of traffic depends on "content quality × content coverage." The automation logic of BMS DXP lies in creating carefully planned content once, which is then intelligently split, combined, and distributed by the system, ultimately leading to business goals:

• Experience Manager automatically builds and maintains a structured knowledge base and Topic Cluster for SEO and GEO, ensuring systematic content coverage.

• DAM automatically stores and manages technical documents, images, and videos, allowing them to be reused across multiple pieces of content and multiple sites, greatly strengthening the brand's professional authority in the eyes of both AI and users (E-E-A-T).

• E-commerce automatically facilitates the transition from content to products and triggers inquiry forms, forming a seamless conversion loop of "being seen → being educated → generating inquiries."

With BMS DXP, the content lifecycle is no longer a series of isolated publishing and updating tasks; it is embedded in an automated growth system. Companies can systematically build and expand their online professional reputation and visibility at significantly lower time and labor cost than with traditional methods, whether they face traditional Google search or emerging AI answer engines. This is what a step change in marketing efficiency looks like: automation gives a limited marketing budget leverage, so returns compound and become more predictable.

VI. Benchmark Case: HPDMC Compressor's Holistic Growth Practice

As mentioned several times earlier, HPDMC compressor is a pioneering example of integrating SEO and GEO, driven by the BMS digital experience platform, to achieve high-quality growth overseas. The core value of this case lies in the fact that it is not merely an isolated traffic generation technique, but a holistic growth practice encompassing professional website design, an AI-native content system, and an intelligent conversion path. Its official website has evolved from a simple information display window into a highly efficient growth engine that precisely reaches global engineering procurement decision-makers.

(I) The Cornerstone: A B2B Professional Website Designed for "Engineering Thinking"

The success of the HPDMC website is rooted in its profound understanding of industrial B2B procurement decision-making logic. The website is built around the decision-making journey of professional buyers (engineers), possessing distinct benchmark characteristics:

• Clear and Authentic Professional Presentation: The website navigation and product classification strictly follow the thinking habits of engineers, such as classification by application scenarios and technical parameters, rather than internal corporate structure. Visually, it uses high-definition real-shot images and working condition videos to establish a realistic and credible professional image, far superior to overly beautified renderings.

• Structured and Intelligent Parameter Presentation: Technical parameters are the cornerstone of engineers' decision-making. The website not only provides complete and accurate basic parameters but also utilizes advanced "parameter matrices" or visualization solutions, such as modules that support dynamic comparison of key parameters across multiple products, and technologies that transform complex parameters into interactive charts. This greatly improves the efficiency of information acquisition for professional users.

• Seamlessly Integrated Conversion and Support System: The website is dedicated to shortening the decision-making path. It integrates a dynamic quotation system or intelligent inquiry forms, allowing customers to configure parameters online, and the system generates customized quotations in seconds based on company data. At the same time, a technical consultation entry point is prominently displayed on the product page, providing instant professional support through a combination of AI chatbots and human customer service.

This user-centric design ensures that traffic is effectively captured and trusted, laying the foundation for subsequent high-quality conversions.

(II) Core: Deeply Supporting GEO's AI-Native Content System

The strategic core of the HPDMC content system is the execution of the GEO (Generative Engine Optimization) strategy, which aims to "let the brand be spoken by AI." Its content production and distribution revolve around becoming the "default source of information" for AI:

1. Strategic Framework and Closed-Loop Operation: The website follows a clear GEO execution framework. Content templates are designed around GEO principles (such as Q&A-style and comparison-style content), and content is published and updated regularly for AI to reference, forming an "AI-indexed content pool." Results are monitored and A/B tested against key indicators such as prompt mention rate and AI recommendation frequency, and the strategy is iterated in a closed loop each quarter.

2. Restructuring of Product Descriptions: From Marketing Jargon to Semantic Answers: Traditional product descriptions have been completely restructured to match the retrieval logic of generative AI.

• Semantic Richness and Intent Matching: Ambiguous marketing jargon is abandoned in favor of natural, specific language to describe parameters, scenarios, and user benefits, directly and structurally answering complete questions that users might ask AI.

• Structured Data (Schema) and Authoritative Proof: Complete Schema markup (Product, Review, etc.) is added to product pages, defining key attributes in a vocabulary that machines can parse. Social proof such as user reviews and expert evaluations is also actively integrated to build a network of mutually reinforcing trust signals, in line with the "all-domain trust asset layout" strategy.

3. Semantic Structure of Technical Documents: Becoming an Authoritative Source Library for AI: To ensure that complex technical information is accurately understood by AI, technical documents have undergone deep semantic optimization.

• Natural Language Processing (NLP) technology is applied to automatically extract key information such as technical specifications and operating procedures, reorganizing and classifying content to improve machine readability.

• By building hierarchical indexes and knowledge graph indexes, document units are semantically linked, forming a graph structure. This helps AI more accurately identify and associate relevant content, ensuring the professionalism and consistency of generated answers.

This content system is designed so that HPDMC's professional content on compressor technology, selection, and applications is frequently cited by generative AI (such as ChatGPT and DeepSeek), capturing user attention directly inside AI-generated answers and achieving a paradigm shift from "website traffic generation" to "answer penetration."
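The Schema markup mentioned in the list above is usually emitted as JSON-LD. The sketch below builds a schema.org `Product` object in Python; the helper name `product_jsonld` and all sample values are illustrative placeholders, not HPDMC's actual markup or data.

```python
import json


def product_jsonld(name, description, brand, specs, rating=None):
    """Build schema.org Product markup as a JSON-LD string.

    specs maps a parameter name to its value; rating is an optional
    (rating_value, review_count) pair. All inputs are supplied by the caller.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "brand": {"@type": "Brand", "name": brand},
        # Technical parameters become machine-readable name/value pairs.
        "additionalProperty": [
            {"@type": "PropertyValue", "name": key, "value": value}
            for key, value in specs.items()
        ],
    }
    if rating is not None:
        rating_value, review_count = rating
        data["aggregateRating"] = {
            "@type": "AggregateRating",
            "ratingValue": rating_value,
            "reviewCount": review_count,
        }
    return json.dumps(data, indent=2, ensure_ascii=False)
```

The returned string would typically be embedded in the product page inside a `<script type="application/ld+json">` element.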

(III) Guarantee: Robust and Efficient Technical SEO Foundation

Although not all technical details are publicly available, an industry benchmark website like HPDMC can be expected to follow rigorous best practices in its technical SEO architecture, so that content is effectively crawled and indexed by traditional search engines, whose indexes are in turn an important source of GEO traffic. This typically includes:

• Clear and flat page structure: Directory levels do not exceed 3 levels, facilitating search engine crawling and user navigation, and breadcrumb navigation is used to enhance structural understanding.

• Precise meta tags (TDK: title, description, keywords) and semantic HTML: Optimizing titles and descriptions for core keywords such as "reciprocating compressor" and "parameter settings," and using appropriate H1-H6 tags to build content hierarchy.

• Search engine-friendly technical implementation: Creating and submitting an XML sitemap, controlling page size to ensure loading speed, and ensuring clean code to avoid content not being crawled due to Flash or complex JavaScript.
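The flat-structure and breadcrumb guidelines above are simple enough to check automatically. The following sketch assumes that every path segment of a URL counts as one directory level, which is one possible reading of the "no more than 3 levels" rule; the function names are hypothetical.

```python
from urllib.parse import urlparse

MAX_DEPTH = 3  # the "directory levels do not exceed 3" guideline


def path_segments(url):
    """Return the non-empty path segments of a URL."""
    return [part for part in urlparse(url).path.split("/") if part]


def is_flat_enough(url, max_depth=MAX_DEPTH):
    """True if the URL sits no deeper than max_depth path segments."""
    return len(path_segments(url)) <= max_depth


def breadcrumbs(url):
    """Derive a (label, link) breadcrumb trail from the URL path."""
    parsed = urlparse(url)
    root = f"{parsed.scheme}://{parsed.netloc}"
    trail = [("Home", root + "/")]
    current = root
    for segment in path_segments(url):
        current = f"{current}/{segment}"
        # Turn a slug such as "screw-compressors" into a readable label.
        trail.append((segment.replace("-", " ").title(), current))
    return trail
```

A site audit could run `is_flat_enough` over every URL in the sitemap and flag the ones that return `False`.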

(IV) Results: Measurable Growth and Optimization Feedback Loop

HPDMC's practices are not just theoretical; their effectiveness is verified through a set of quantifiable metrics, driving continuous optimization:

• Traffic and engagement: Monitoring page views and unique visitors (PV/UV), average visit duration, and pages per visit to assess user engagement and content appeal. Bounce rate, especially on the homepage, is a key test of first impressions and content relevance.

• Source and keyword performance: Analyzing the share of traffic that comes from search engines and tracking rankings for core product keywords (such as "screw compressor cfm") and the brand keyword "HPDMC" to measure visibility in professional searches. Frequent exposure on B2B platforms like Alibaba adds an important set of external traffic sources.

• Core conversion funnel: The most critical metric is the conversion rate, i.e., the proportion of visitors who complete target actions such as inquiries and form submissions. By analyzing reach (successful landing page loads) and second-click rate (user interaction after the first click), bottlenecks in the click-to-inquiry path can be precisely identified. Available information states that their "data conversion results are very good," which points to strong inquiry conversion, although no specific figures are cited.

• User experience and path optimization: Using data to track user time spent on key product pages and the number of pages viewed to determine content appeal. By analyzing mainstream browsing paths (from the homepage to product details, and then to case studies and inquiry pages), internal link guidance can be optimized, and intelligent tools can be used to intervene at critical decision points (such as when users browse similar products for the 3rd to 5th time) to improve conversion efficiency.
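The funnel metrics named above can be computed from four raw counts. The sketch below is one plausible formalization, not HPDMC's actual reporting logic: it assumes that "visitors" means successful landing page loads, and the field and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class FunnelCounts:
    clicks: int         # clicks on a search result or ad
    landings: int       # landing pages that loaded successfully ("reach")
    second_clicks: int  # visits with at least one further interaction
    inquiries: int      # completed inquiries or form submissions


def _ratio(numerator, denominator):
    """Safe division that returns 0.0 for an empty denominator."""
    return numerator / denominator if denominator else 0.0


def funnel_rates(counts):
    """Stage-to-stage pass-through rates plus the overall conversion rate."""
    return {
        "reach_rate": _ratio(counts.landings, counts.clicks),
        "second_click_rate": _ratio(counts.second_clicks, counts.landings),
        "inquiry_rate": _ratio(counts.inquiries, counts.second_clicks),
        "conversion_rate": _ratio(counts.inquiries, counts.landings),
    }


def bottleneck(rates):
    """Name the stage with the lowest pass-through rate."""
    stages = ("reach_rate", "second_click_rate", "inquiry_rate")
    return min(stages, key=lambda stage: rates[stage])
```

The lowest pass-through rate is only a rough first indicator of where the click-to-inquiry path loses the most visitors; stages with naturally different baselines still need to be judged against their own history.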

(V) Summary: The Holistic Logic from "Information Platform" to "Growth Hub"

The HPDMC compressor case clearly demonstrates a replicable path: a professional B2B company builds a foundation of trust by creating an engineer-friendly website, deploys an AI-native structured content system to deeply penetrate generative search traffic, and achieves closed-loop growth through robust technical SEO and data-driven conversion funnel optimization. Its website has evolved into a digital growth hub integrating brand display, professional education, intelligent customer acquisition, and sales collaboration. This not only validates the effectiveness of the SEO and GEO integration strategy advocated by the BMS platform, but also provides a highly valuable practical paradigm for how industrial manufacturing companies can achieve high-quality overseas growth in the new AI search era.

VII. Enterprise-Level Solutions Under the E-E-A-T Standard

The HPDMC benchmark case mentioned earlier clearly demonstrates the tangible growth returns of building a content system around Experience, Expertise, Authoritativeness, and Trustworthiness. However, for most enterprises, isolated, sporadic content successes are difficult to replicate. The real challenge is how to transform the abstract E-E-A-T standard of "content quality" into a stable output system that is scalable, automatically monitored, and implemented collaboratively across departments.

This is precisely the core value of BMS DXP as an enterprise-level solution: it is not just another content writing tool, but a digital infrastructure that internalizes the E-E-A-T standard into platform capabilities and drives systemic growth.

(I) Pillar One: Platform-Based Content Hub, Scaling "Expertise" and "Authoritativeness"

The primary challenge faced by enterprises is fragmented content production. The marketing department creates blogs, the technical department produces white papers, and the sales team accumulates case studies. These dispersed content assets cannot form a cohesive force, resulting in weak overall website expertise and authoritativeness.

BMS DXP, through its dual engines of Content Management Core (Experience Manager) and Digital Asset Management Core (DAM), establishes a unified content hub for enterprises:

• Structured Industry Knowledge Base: The platform supports the creation of Topic Clusters oriented towards AI search logic. For example, around the core theme of "industrial compressors," the system systematically produces related content such as "selection guides," "principle analysis," "maintenance manuals," and "application cases," forming a semantic network. This not only enhances the overall professional depth of the website but also directly matches the information retrieval patterns of AI tools like ChatGPT for answering complex questions, making enterprise content the preferred source of information for AI.

• Asset Unification and Reuse: Digital assets such as technical white papers, high-definition product images, working condition videos, and third-party test reports are centrally managed through DAM. These assets can be flexibly used in any relevant product pages, blogs, or solutions, providing visual and verifiable support for arguments, greatly strengthening the "verifiability" (Trustworthiness) in E-E-A-T.
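A Topic Cluster of the kind described above can be modeled as a pillar page plus typed supporting pages. The sketch below is illustrative only: the `TopicCluster` class is hypothetical, it keeps at most one page per content type for simplicity, and the required types are taken from the "industrial compressors" example in the text.

```python
from dataclasses import dataclass, field

# Content types named in the "industrial compressors" example.
REQUIRED_TYPES = (
    "selection guide",
    "principle analysis",
    "maintenance manual",
    "application case",
)


@dataclass
class TopicCluster:
    pillar_topic: str
    pillar_url: str
    pages: dict = field(default_factory=dict)  # content type -> URL

    def add_page(self, content_type, url):
        """Register a supporting page under its content type."""
        self.pages[content_type] = url

    def missing_types(self):
        """Content types the cluster still lacks (coverage gaps)."""
        return [t for t in REQUIRED_TYPES if t not in self.pages]

    def internal_links(self):
        """(source, target) link pairs tying each page to the pillar."""
        links = []
        for url in self.pages.values():
            links.append((self.pillar_url, url))
            links.append((url, self.pillar_url))
        return links
```

`missing_types` gives an editorial team a simple coverage checklist, while `internal_links` lists the two-way links that make the cluster read as one connected topic.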

(II) Pillar Two: Fully Automated Technical Foundation, Ensuring Real-Time "Experience" and "Trustworthiness"

The E-E-A-T standard emphasizes continuous content updates and timeliness. Traditional models that rely on manual maintenance of technical SEO and content distribution cannot meet the demands of the AI era in either speed or accuracy.

BMS DXP fully automates underlying technical processes, allowing marketing teams to focus on the content itself, while the platform ensures it gets "seen":

• Intelligent Sitemap Management: The platform automatically handles the hierarchy, updates, and broken link cleanup of XML Sitemaps. Once content is published, its URL is immediately added to the Sitemap and the <lastmod> timestamp is updated in real time, so that search engines and AI crawlers can discover the latest and most relevant content as quickly as possible and the crawl budget is used efficiently. This is a technical prerequisite for maintaining "freshness" and "credibility."

• AI-Ready Infrastructure: BMS DXP automatically generates and maintains the llms.txt file. This allows businesses to declare which high-value, high-authority core content (such as product technical specifications and authoritative white papers) should be surfaced first to AI crawlers like GPTBot and ClaudeBot. In effect, it gives the business a dedicated fast lane into AI-generated answers, systematically improving brand citation rates and visibility in AI search.
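As a rough illustration of what generating llms.txt involves, the sketch below renders the Markdown layout used by the community llms.txt proposal: a title, a short blockquote summary, and sections of annotated links. The function name and the sample content are hypothetical, and how a given AI crawler actually uses the file is decided by that crawler, not by the generator.

```python
def build_llms_txt(site_name, summary, sections):
    """Render an llms.txt document.

    sections maps a section heading to a list of (title, url, note)
    tuples describing the pages to highlight for AI agents.
    """
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    # End with exactly one newline.
    return "\n".join(lines).rstrip() + "\n"
```

Calling this function from the same publish and update events that refresh the Sitemap would keep both files in step with the content.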

(III) Pillar Three: Closed-Loop Growth Engine, Transforming "Authoritative Content" into "Business Value"

The ultimate goal of building E-E-A-T is not merely to gain traffic or citations, but to achieve business conversion. In many companies, content and conversion paths are disconnected, so the value of the traffic is lost.

BMS DXP integrates an e-commerce and conversion engine, achieving a seamless connection from content trust to business opportunity capture:

• Content-Product-Inquiry Integration: While displaying in-depth technical articles (demonstrating Expertise) or application cases (demonstrating Experience), the page can seamlessly embed relevant product modules or intelligent inquiry forms. When users are convinced by the professionalism of the content, their purchase or inquiry intent can be immediately captured, forming a short path of "educated → trusted → converted."

• Data-Driven Optimization Closed Loop: The platform's capabilities allow businesses to track not only how much traffic content generates, but also to analyze which content generates high-quality inquiries, and the differences in performance between traditional search and AI search. This provides precise data support for continuously optimizing content strategies and improving the return on investment of E-E-A-T assets.
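One way to compare traditional search with AI search, as described above, is to classify each visit by its referrer and count inquiries per page and channel. The sketch below is an assumption-laden illustration: the host lists are short examples rather than a complete catalogue, referrer data is often missing in practice, and the function names are hypothetical.

```python
from collections import defaultdict
from urllib.parse import urlparse

# Illustrative host lists only; a real deployment would maintain its own.
AI_HOSTS = {"chatgpt.com", "perplexity.ai", "claude.ai"}
SEARCH_HOSTS = {"google.com", "bing.com"}


def channel(referrer):
    """Classify a referrer URL as AI search, traditional search, or other."""
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    if host in AI_HOSTS:
        return "ai_search"
    if host in SEARCH_HOSTS:
        return "traditional_search"
    return "other"


def inquiries_by_content(visits):
    """Aggregate visits and inquiries per (page, channel).

    visits is an iterable of (page_url, referrer, made_inquiry) tuples.
    """
    stats = defaultdict(lambda: {"visits": 0, "inquiries": 0})
    for page, referrer, made_inquiry in visits:
        bucket = stats[(page, channel(referrer))]
        bucket["visits"] += 1
        bucket["inquiries"] += int(made_inquiry)
    return dict(stats)
```

Dividing inquiries by visits in each bucket shows which pieces of content convert best on each channel.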

(IV) Summary: From "Tool Stacking" to "Capability Internalization"

In an era where generative AI is reshaping the search ecosystem, what businesses need is not more single-point SEO or content tools, but an integrated solution that can platformize, automate, and close the loop on the E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness) standard.

BMS DXP is precisely this kind of enterprise-level solution. Through its three-in-one architecture of a content hub, automated technology foundation, and closed-loop conversion engine, it helps businesses systematically transform fragmented content efforts into sustainable digital assets that drive growth, ensuring that businesses can consistently "be seen, be cited, be trusted, and be chosen" in both human and AI search environments.

FAQ

1. What are the core differences between BMS DXP and traditional enterprise website systems?

BMS DXP is not just a website building tool, but a digital experience infrastructure that integrates content, data, AI, and global growth capabilities.

2. Is BMS DXP suitable for B2B manufacturing companies?

Yes, its architecture is specifically designed for multilingual content management, overseas customer acquisition, and complex business processes.

3. How does BMS DXP support SEO and GEO?

Through structured content, data governance, and AI-ready design, it is compatible with both search engines and generative AI platforms.

4. Does the system support high concurrency and global access?

Yes, it leverages microservice architecture, CDN, caching, and message queues to achieve high performance and global scalability.

5. Is BMS DXP easy to extend with additional features?

Yes, the modular microservice design allows for flexible expansion and continuous iteration based on business needs.
