Mon-Fri, 9:00-17:00 (Beijing Time, UTC+8)

Intelligent Management

Supports full-text search, tag-based search, category-based search, AI-powered multimodal search, and more to quickly locate required assets; enables real-time collaboration and synchronization across different regions and teams, enhancing team collaboration efficiency.

Core Advantages

Intelligent Search System

Supports full-text, tag-based, category-based, and AI-powered multimodal search for rapid asset discovery.

Real-time Collaboration and Synchronization

Enables real-time editing and synchronization across geographically distributed teams to enhance collaboration efficiency.

AI Multimodal Recognition

Automatically identifies content in images, videos, and audio files, generates tags, and optimizes search.

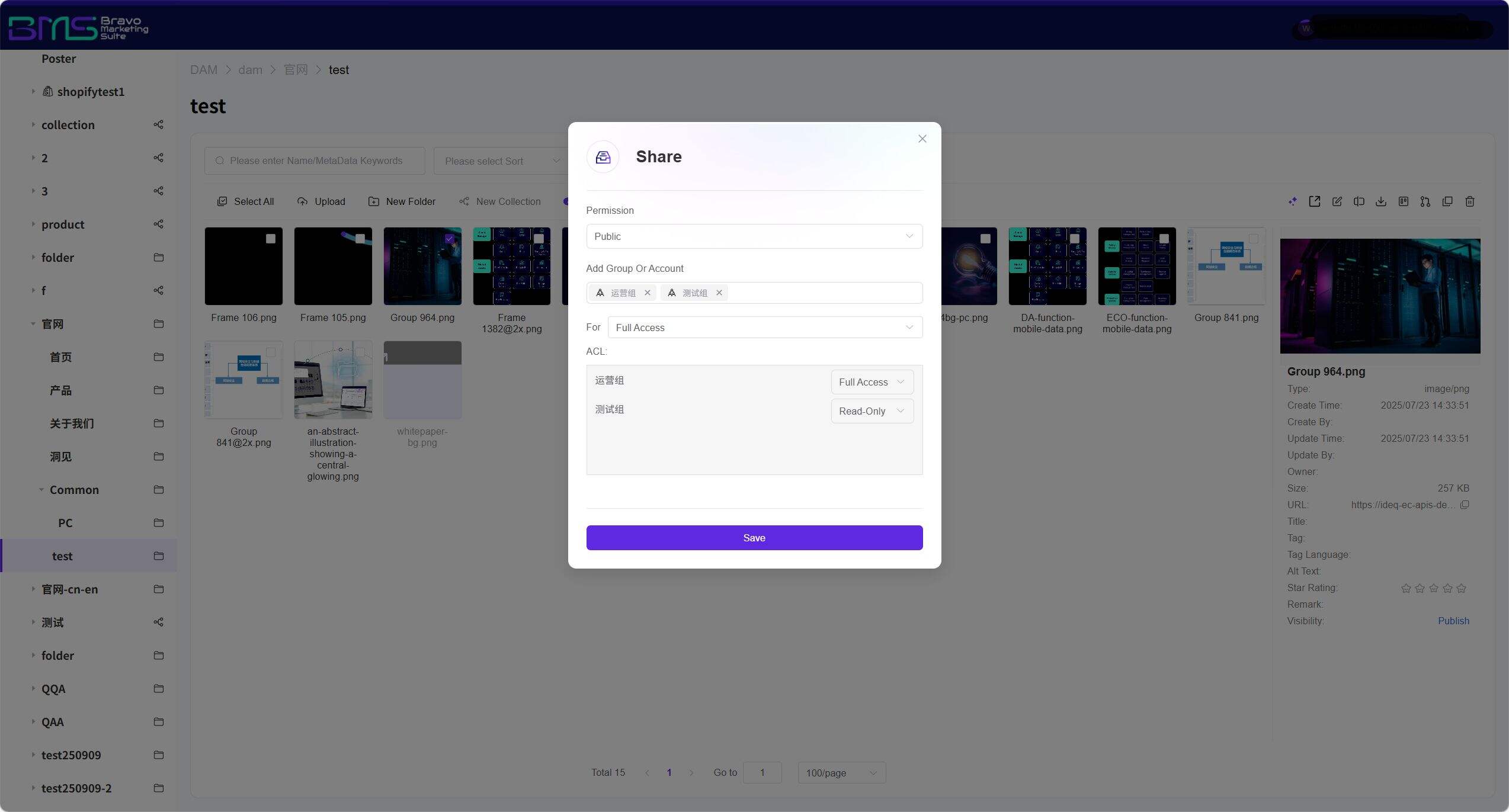

Intelligent Permission Management

Supports configurable permissions to prevent unauthorized operations and ensure asset security.

Intelligent Management: AI-Powered Search and Collaboration for Digital Asset Management (DAM) Systems

What Is Intelligent DAM Management? Why Do Enterprises Need It?

Intelligent DAM (Digital Asset Management) refers to a modern solution that uses artificial intelligence to provide intelligent search, automatic tagging, real-time collaboration, and secure governance for enterprise unstructured data, including images, videos, and documents.

Traditional folder-based management can no longer meet the demands of large-scale asset retrieval and global collaboration, so intelligent DAM has become core infrastructure for the digital transformation of enterprise content. Moving from "managing assets" to "activating assets", our DAM system goes beyond a simple storage tool and becomes a digital asset hub that integrates intelligent search, seamless collaboration, deep association, trusted traceability, and proactive defense. Through five core advantages, it redefines the efficiency of digital asset management: enterprises shift from passive asset management to proactive asset activation, so that every digital asset delivers maximum business value at the right time and in the right way.

Five Core Advantages: Redefining Digital Asset Management Efficiency

I. AI-Powered Multimodal Search: Breaking Format Barriers for Sub-Second Asset Retrieval

Cross-modal asset search supporting text, images, video, and voice

Core Capabilities:

• Full-text semantic search: Uses NLP technology to understand document content, video subtitles, and printed text within images—breaking free from filename limitations.

• Image-to-image / video-to-video search: Employs computer vision to extract color, composition, and stylistic features; supports uploading reference images to locate visually similar assets.

• Voice-command search: Enables natural-language voice input on mobile devices; ASR engine automatically converts speech into structured queries.

• Cross-modal semantic association: Entering emotional keywords (e.g., "cheerful, tech-savvy") simultaneously retrieves matching background music, video clips, and graphic assets.

Our DAM system overcomes the limitations of traditional keyword-only search by leveraging AI multimodal technologies to unify retrieval across all formats—text, images, video, and voice. Whether users input a descriptive phrase, upload a reference image, or speak a query aloud, the system precisely interprets intent and locates target assets within seconds—even from massive repositories. This reduces asset discovery time from hours to seconds, empowers non-technical users to navigate complex asset libraries effortlessly, and significantly boosts overall team productivity—eliminating the inefficient “needle-in-a-haystack” search paradigm.
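One common way to unify retrieval across formats is to map every asset and every query into a shared vector space and rank by similarity. The sketch below illustrates that idea only; the source does not describe the product's actual retrieval method. The asset names, the toy embedding values, and the `search` function are all hypothetical, and a real system would obtain the vectors from a multimodal encoder.

```python
import math

# Hypothetical pre-computed embeddings. In practice these would come from a
# multimodal encoder that places text, images, video, and audio in one
# shared vector space. The values below are toy numbers for illustration.
ASSET_EMBEDDINGS = {
    "beach_photo.jpg": [0.9, 0.1, 0.0],
    "upbeat_track.mp3": [0.1, 0.9, 0.1],
    "product_demo.mp4": [0.2, 0.3, 0.9],
}


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def search(query_embedding, top_k=2):
    """Rank every asset, regardless of file format, by similarity to the query."""
    scored = [
        (cosine_similarity(query_embedding, vec), name)
        for name, vec in ASSET_EMBEDDINGS.items()
    ]
    scored.sort(reverse=True)  # highest similarity first
    return [name for _, name in scored[:top_k]]
```

Because the query is just a vector, the same `search` call works whether the query embedding was produced from typed text, a reference image, or transcribed speech.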

II. Global Real-Time Collaboration: Seamless Coordination Across Time Zones

Seamless team collaboration across regions and time zones

Core Capabilities:

• Conflict-free concurrent editing: Supports simultaneous editing, annotation, etc., without file locking.

• Millisecond-level data synchronization: Edge computing + cloud sync architecture ensures real-time consistency across nodes in New York, London, Singapore, Shanghai, etc.

• Enables seamless team collaboration across regions and time zones—freeing creativity and decision-making from geographic constraints.

The system embeds a globally distributed collaboration engine that lets team members worldwide concurrently edit, annotate, and comment on the same asset online. All operations synchronize instantly and versions update automatically, which removes the need for repeated file transfers and avoids version confusion. Designers in Beijing, marketing staff in New York, and project managers in Tokyo can collaborate within the same digital workspace, accelerating creative execution and project delivery by an order of magnitude.
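Editing without file locks is often achieved with merge rules that give the same result no matter in which order replicas exchange updates. The sketch below shows one such rule, a last-writer-wins map for annotations. It is an illustration under stated assumptions, not the product's documented mechanism: the `merge` function, the replica names, and the `(timestamp, replica_id, text)` layout are hypothetical.

```python
def merge(state_a, state_b):
    """Merge two replicas' annotation maps.

    Each state maps annotation_id -> (timestamp, replica_id, text).
    For every id, the entry with the larger (timestamp, replica_id) pair
    wins, so merging is commutative, associative, and idempotent.
    """
    merged = dict(state_a)
    for ann_id, entry in state_b.items():
        if ann_id not in merged or entry[:2] > merged[ann_id][:2]:
            merged[ann_id] = entry
    return merged


# Two sites edit concurrently without locking the file.
beijing = {"note-1": (1, "beijing", "Brighten the logo")}
new_york = {
    "note-1": (2, "new_york", "Brighten the logo by 10%"),
    "note-2": (1, "new_york", "Crop to 16:9"),
}

# Both merge orders converge to the same state.
converged = merge(beijing, new_york)
```

The replica id acts only as a tie-breaker when two edits carry the same timestamp, which keeps the outcome deterministic on every node.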

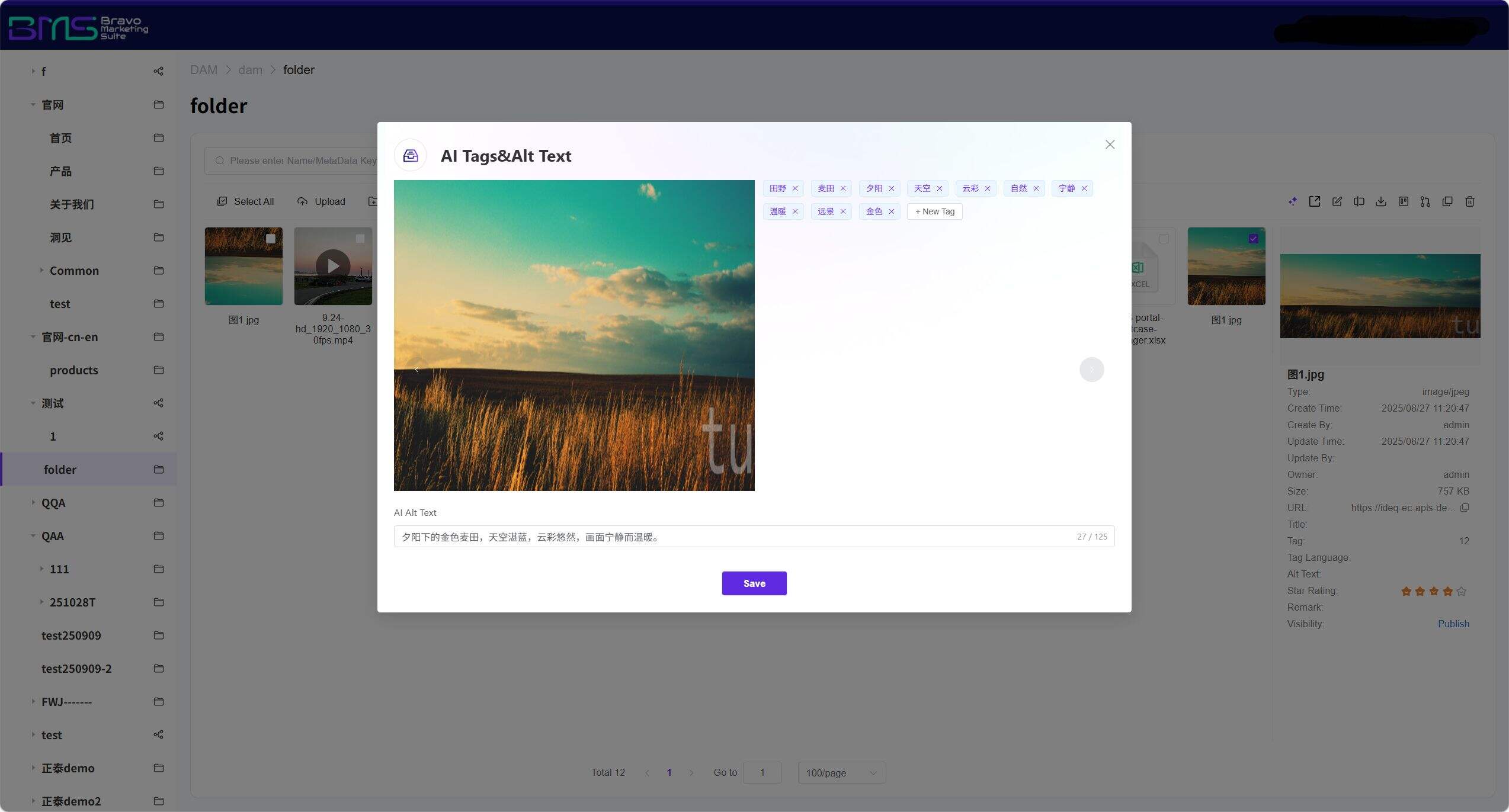

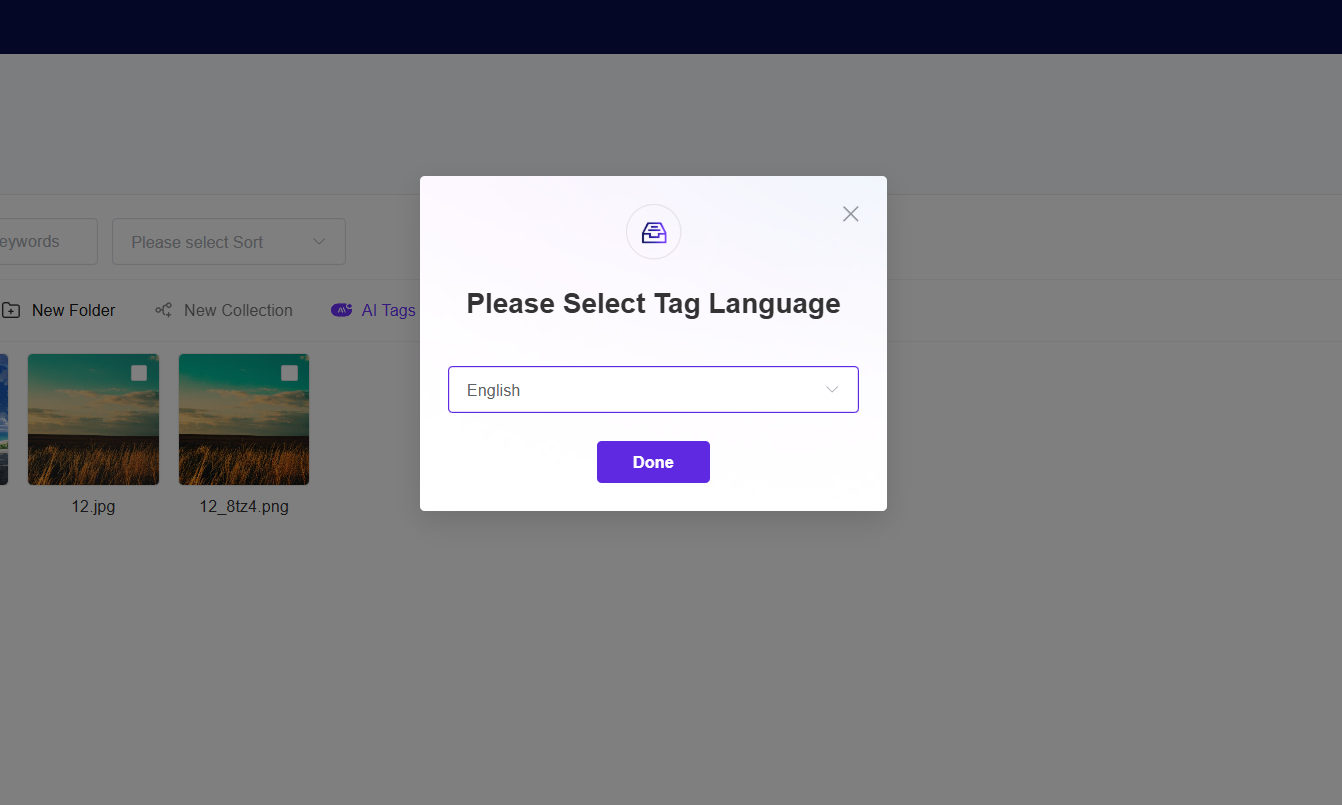

III. Intelligent Tagging System: AI-Automated Tagging and Dynamic Knowledge Graph Construction

AI-powered automated tagging and dynamic knowledge graphs enable assets to “speak for themselves,” achieving deep association and intelligent recommendations.

Core Capabilities:

• Four-dimensional automated tagging:

– Visual tags (objects/scenes/colors/composition, supporting Pantone-level color accuracy)

– Emotional tags (style recognition: minimalist/luxurious/vibrant/serene)

– Business tags (Campaign ID/Product SKU/Channel specifications)

– Compliance tags (license expiration date/commercial usage rights/sensitive element detection)

• Dynamic knowledge graph: Tags form a "product-scene-style-channel" interconnection network; related tags are intelligently recommended during searches.

• Continuous learning mechanism: Analyzes user behavior to automatically optimize tag weights, building enterprise-specific visual semantic understanding.

• Multilingual tagging: Built-in multilingual processing enables intelligent tagging in major languages including Chinese and English.

Accuracy Metrics:

AI tag accuracy for general scenarios reaches 94%; industry-specific models (e.g., medical imaging, industrial components, fashion fabrics) can be rapidly customized using only 50–100 sample images.

The system employs computer vision and NLP technologies to automatically identify key information—including content, scene, people, and sentiment—upon asset ingestion, generating precise tags. These tags do not exist in isolation but collectively form a dynamic knowledge graph, logically linking seemingly unrelated assets. When searching for a “summer beach” image, the system not only returns results but also intelligently recommends related assets—such as “beach activity videos” and “summer marketing copy”—maximizing asset reuse value.
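The "related assets" behavior described above can be approximated with a tag co-occurrence graph: tags that appear together on the same asset are linked, and the strongest links become recommendations. This is a minimal sketch assuming that approach; the asset names, tags, and function names are hypothetical and the source does not specify how its knowledge graph is built.

```python
from collections import Counter, defaultdict
from itertools import combinations

# Hypothetical assets and their AI-generated tags.
ASSET_TAGS = {
    "img_001.jpg": {"summer", "beach", "swimwear"},
    "vid_002.mp4": {"summer", "beach", "volleyball"},
    "copy_003.docx": {"summer", "campaign"},
}


def build_graph(asset_tags):
    """Link every pair of tags that co-occur on an asset, counting occurrences."""
    graph = defaultdict(Counter)
    for tags in asset_tags.values():
        for a, b in combinations(sorted(tags), 2):
            graph[a][b] += 1
            graph[b][a] += 1
    return graph


def related_tags(graph, tag, limit=3):
    """Return the tags most often seen together with `tag`, strongest first."""
    neighbours = graph.get(tag, Counter())
    # Sort by descending count, then alphabetically for a stable order.
    ranked = sorted(neighbours.items(), key=lambda kv: (-kv[1], kv[0]))
    return [name for name, _ in ranked[:limit]]
```

Calling `related_tags(build_graph(ASSET_TAGS), "beach")` ranks "summer" first because it co-occurs with "beach" on two assets, while the other neighbours appear only once.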

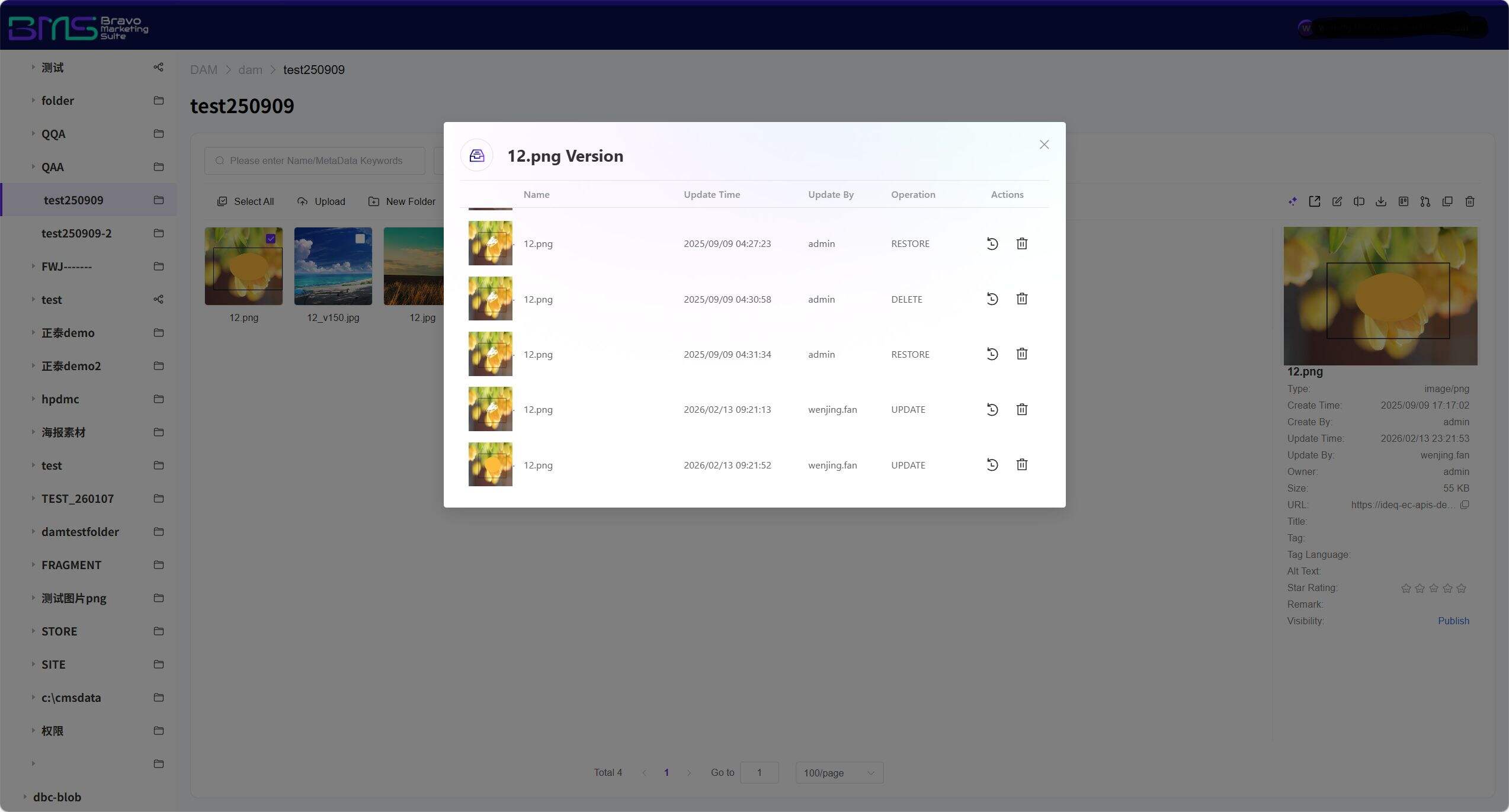

IV. Full-Lifecycle Version Control: Tamper-Proof Asset Evolution Archives

Core Capabilities:

• Atomic-level version snapshots: Records editor, timestamp, and specific editing actions.

• One-click time travel: Instantly roll back to any historical version; associated derivative files restore synchronously.

Our DAM system establishes complete full-lifecycle archives for each digital asset—from creation and modification through review to final publication—precisely recording and preserving every version change. These records employ blockchain-grade immutability technology, guaranteeing authenticity and integrity of asset history. This not only provides robust support for enterprise compliance audits but also enables teams to clearly trace asset evolution when reviewing creative iterations or troubleshooting issues—preventing losses caused by version loss or confusion.
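The source does not say how immutability is implemented. One well-known building block for tamper-evident history is a hash chain, in which each version record stores the hash of the previous record so that any later modification is detectable. The sketch below assumes that technique; the field names and helper functions are hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash preceding the first version


def record_hash(record):
    """Hash a record deterministically (keys sorted before serialization)."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_version(history, editor, timestamp, action):
    """Append a version record that is chained to the previous record's hash."""
    prev_hash = history[-1]["hash"] if history else GENESIS_HASH
    body = {
        "editor": editor,
        "timestamp": timestamp,
        "action": action,
        "prev_hash": prev_hash,
    }
    history.append({**body, "hash": record_hash(body)})
    return history


def verify(history):
    """Return True only if no record was altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in history:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or record_hash(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True
```

A chain like this makes tampering detectable rather than impossible; preventing rewrites of the whole chain additionally requires storing the latest hash somewhere the editor cannot modify.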

V. Dynamic Permissions & AI Security Governance: Granular Access Control Down to Individual Images

Core Capabilities:

• Permission management: Fine-grained configuration down to individual images and specific editing operations.

• AI content security inspection: Automatically scans uploads for unauthorized fonts, competitor elements, sensitive individuals, profanity, inappropriate image content, religious/cultural sensitivities, and copyright compliance, and can trigger blocking, manual review, or anonymization.

• UEBA-based risk awareness: Monitors anomalous download behaviors (e.g., bulk downloads outside work hours, rapid cross-regional logins), triggering step-up authentication in real time.
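As a rough illustration of the bulk-download example in the last bullet, the sketch below flags a session for step-up authentication when many downloads occur outside working hours. The working-hours window, the threshold, and the function name are hypothetical assumptions; a production UEBA engine would typically learn per-user baselines rather than apply fixed rules.

```python
from datetime import datetime

# Hypothetical policy values, chosen only for illustration.
WORK_START_HOUR = 9
WORK_END_HOUR = 17
BULK_THRESHOLD = 50


def needs_step_up(download_times, threshold=BULK_THRESHOLD):
    """Return True when the number of off-hours downloads reaches the threshold."""
    off_hours = [
        t for t in download_times
        if not (WORK_START_HOUR <= t.hour < WORK_END_HOUR)
    ]
    return len(off_hours) >= threshold
```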

The system adopts a dynamic permission management model, supporting multi-tiered access control—from departments and projects down to individual images and files—ensuring “those who should see it can; those who shouldn’t cannot.” Meanwhile, the AI security engine continuously monitors the asset repository, automatically identifying sensitive content and detecting abnormal access behaviors, issuing immediate alerts and blocks upon risk detection. This dual mechanism of “proactive defense + granular control” constructs an impregnable security barrier for enterprise core digital assets.
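Multi-tiered access control of this kind is often resolved by checking the most specific scope first and falling back to broader scopes. The sketch below assumes that "most specific rule wins, otherwise deny" policy; the rule table, user names, and scope labels are hypothetical and are not taken from the product.

```python
# Hypothetical rule table: (user, scope) -> set of permitted operations.
RULES = {
    ("alice", "dept:marketing"): {"view"},
    ("alice", "project:summer-campaign"): {"view", "edit"},
    ("alice", "asset:hero.png"): {"view"},
}


def is_allowed(user, operation, scope_chain, rules=RULES):
    """Check an operation against the first scope that has a rule for the user.

    `scope_chain` lists scopes from most specific (the asset) to least
    specific (the department). If no scope has a rule, access is denied.
    """
    for scope in scope_chain:
        allowed = rules.get((user, scope))
        if allowed is not None:
            return operation in allowed
    return False  # default deny


hero_chain = ["asset:hero.png", "project:summer-campaign", "dept:marketing"]
banner_chain = ["asset:banner.png", "project:summer-campaign", "dept:marketing"]
```

With these rules, alice can edit `banner.png` through her project-level grant, but the asset-level rule on `hero.png` restricts her to viewing that one image.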

Frequently Asked Questions (FAQ):

Q1: Which file formats does the DAM system’s AI multimodal search specifically support? How is “multimodal” defined?

A1: The system supports 200+ formats, including JPG/PNG/PSD/AI (images), MP4/MOV/AVI (video), PDF/DOCX/PPTX (documents), OBJ/FBX (3D models), and WAV/MP3 (audio). "Multimodal" means the system simultaneously understands three information modalities: text, visual, and voice. Text queries can retrieve video content, uploaded images can find stylistically similar assets, and voice commands can locate specific assets. Specialized formats may be supported through custom parsers.

Q2: For multinational enterprises using DAM real-time collaboration, how does the system comply with varying national data regulations?

A2: The system supports Data Residency configuration: EU user data is stored exclusively in Frankfurt nodes; China user data resides in Beijing/Shanghai nodes—physically isolated and fully compliant with local regulations.
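A residency policy like the one in A2 can be pictured as a mapping from user region to permitted storage nodes, with writes refused for any region that has no policy. The sketch below is illustrative only: the region codes, node identifiers, and function name are hypothetical, and only the region-to-city pairing comes from the answer above.

```python
# Region -> allowed storage nodes, following the pairing described in A2.
# The codes and node identifiers themselves are hypothetical.
RESIDENCY = {
    "EU": ["frankfurt"],
    "CN": ["beijing", "shanghai"],
}


def storage_nodes(user_region):
    """Return the nodes where a user's data may be stored.

    Raises ValueError instead of falling back to a default node, so data is
    never written to a location that no policy has approved.
    """
    try:
        return RESIDENCY[user_region]
    except KeyError:
        raise ValueError(
            f"no residency policy configured for region {user_region!r}"
        ) from None
```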

Q3: What is the accuracy of AI-generated tags? Can domain-specific content (e.g., pharmaceutical, industrial) be recognized?

A3: General-scenario tag accuracy reaches 94%. For vertical domains, we apply few-shot learning: uploading just 50–100 annotated samples trains a dedicated model that supports specialized recognition such as medical imaging (CT/MRI), industrial parts (screw/bearing models), and fashion fabrics (material/weave). All AI tags can be corrected manually, and the corrections are fed back immediately to optimize the model, so accuracy keeps improving through a virtuous cycle of "AI-assisted + human-calibrated" tagging.

Q4: Does AI multimodal search support multilingual retrieval? Our business spans multiple global markets.

A4: Yes. The system features built-in multilingual processing capabilities, supporting cross-modal retrieval in major languages including Chinese and English—and can be extended to additional languages per enterprise requirements, meeting global asset management needs.

Q5: Does full-lifecycle version control support asset rollback? Can I recover from accidental edits?

A5: Fully supported. Every version is independently restorable; users may roll back assets to any historical version at any time. All rollback operations are logged, ensuring full auditability and traceability.

Summary: Why Is Intelligent DAM Management Needed Now?

In the era of generative AI and content explosion, enterprises face dual challenges: exponential growth in asset volume and rapidly escalating collaboration complexity. Intelligent DAM addresses these through:

• AI multimodal search to resolve the “can’t find it” problem

• Global real-time collaboration to resolve the “hard to collaborate” problem

• Intelligent tagging system to resolve the “hard to manage” problem

• Version control & permission management to resolve the “high-risk” problem

Together, these capabilities enable enterprises to achieve a step-change in content productivity, transforming digital assets from cost centers into growth engines.

Next Step: Schedule a demonstration of the Intelligent DAM Management solution to receive an industry-specific proposal.

Want to know more about our products?

With years of experience serving Fortune 500 clients, we offer flexible solutions and integrated implementation.
